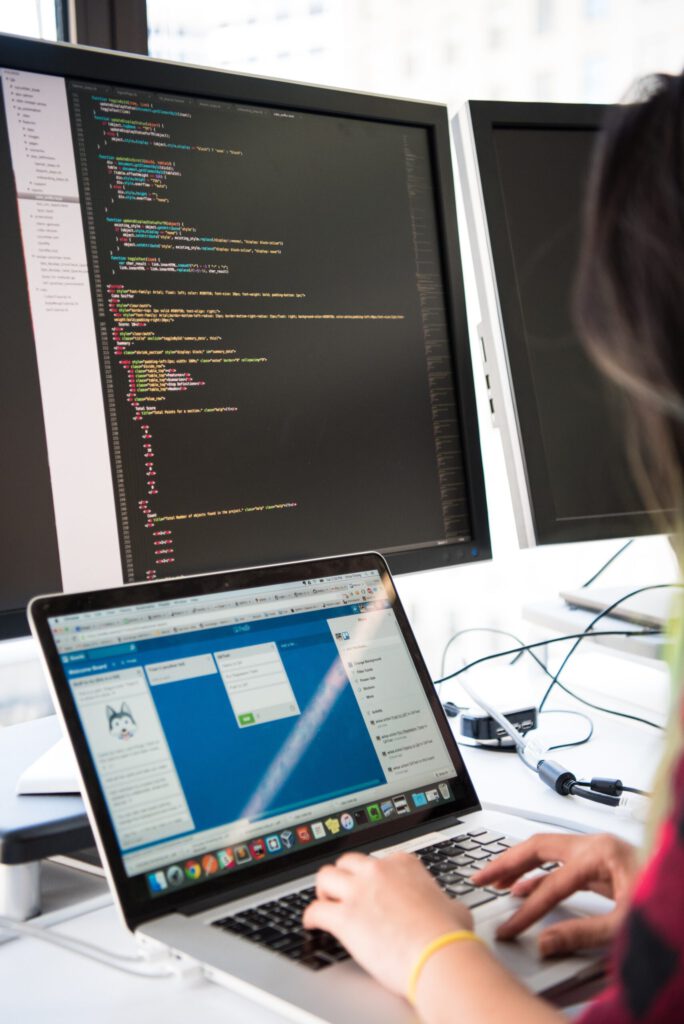

Data has become the core of business strategy and market research. Everyone from a startup to a Fortune 500 company needs data to plan campaigns and make other critical business decisions.

The internet holds more data than you can imagine, and it can be tough to pick the relevant information out of the noise. You have to access and analyze vast amounts of data to find it.

It is like searching for a needle in a haystack. To avoid the effort of going through hundreds of web pages manually, you can use web scraping. Web scraping is a method of extracting data in bulk from various digital platforms.

What is Web Scraping?

Web scraping automates the process of extracting data so that it is fast and efficient. It can help you pull large amounts of data with ease, without the manual labor. You can even extract data that you cannot copy by hand, such as text on pages that prevent you from selecting it.

You can use web scraping for various purposes such as Competitor Price Monitoring, Monitoring MAP Compliance, Fetching Product Descriptions, Real-Time Analytics, Data-driven Marketing, Content Marketing, Lead Generation, Competitive Analysis, and SEO Monitoring.

Web scraping has a use in almost every industry and has become a must-have tool for many businesses. It makes researching new content and keeping up with the latest market trends easier.

However, there are a few risks involved in web scraping. It relies on automated tools such as scrapers and bots that send heavy traffic to a website. That traffic can raise a red flag, and the website may block your IP address from further browsing.

Additionally, many websites use anti-scraping tools that detect bot activity and feed the bot incorrect data. This can cost you money and time, as the data you have gathered might be irrelevant or inaccurate. The three techniques below can improve your scraping success rate and help you avoid getting blocked.

Use residential proxies

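Residential proxies act as a mask over your real IP address. They route your requests through IP addresses that internet service providers assign to home connections, so the traffic looks like it comes from an ordinary household. An IP address is like your digital identity. Every website you visit can see your IP address and use it to estimate your approximate location, such as your city or region. This can expose you to some cyber threats, as your location is revealed to every server you connect to.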

When you use a bot or a crawler to scrape a website, the website can flag you as a bot and block your activity. To prevent this, use residential proxies to rotate your IP address at short intervals so that no single address attracts attention.
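The rotation described above can be sketched in a few lines of Python. This is a minimal illustration, not a production setup: the proxy URLs are hypothetical placeholders, the names `ProxyRotator` and `opener_for` are invented for this example, and it rotates after a fixed number of requests rather than on a timer.

```python
import itertools
import urllib.request

# Hypothetical residential proxy endpoints (assumption): replace these
# with the addresses supplied by your proxy provider.
PROXY_POOL = [
    "http://proxy1.example.com:8000",
    "http://proxy2.example.com:8000",
    "http://proxy3.example.com:8000",
]


class ProxyRotator:
    """Hand out proxies round-robin, switching after a fixed number of requests."""

    def __init__(self, proxies, requests_per_proxy=5):
        if not proxies:
            raise ValueError("proxy pool must not be empty")
        if requests_per_proxy < 1:
            raise ValueError("requests_per_proxy must be at least 1")
        self._cycle = itertools.cycle(proxies)
        self._requests_per_proxy = requests_per_proxy
        self._used = 0
        self._current = next(self._cycle)

    def next_proxy(self):
        """Return the proxy to use for the next request."""
        if self._used >= self._requests_per_proxy:
            # The current proxy has served its share; move to the next one.
            self._current = next(self._cycle)
            self._used = 0
        self._used += 1
        return self._current


def opener_for(proxy_url):
    """Build a urllib opener that sends HTTP and HTTPS traffic through proxy_url."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)
```

In use, you would call `rotator.next_proxy()` before each request and open the page with `opener_for(proxy).open(url)`. A smaller `requests_per_proxy` value means each IP address is seen less often, at the cost of using up the pool more quickly.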

Residential proxies help you scrape the web faster because they make it less likely that websites will block your IP address or feed you incorrect data. These proxies are also helpful for accessing geo-restricted websites and keeping your own IP address private. You can visit websites blocked in your country and scrape data for your various needs.

Use a headless browser with Puppeteer to mimic real-user behavior

Using a proxy with Puppeteer is another great tactic to improve your web scraping capabilities. Puppeteer is a Node.js library that drives a headless Chrome or Chromium browser, so it can mimic real-user behavior and make it less likely that websites flag you as a bot. You can also script it to scrape at a human pace, with proper intervals and breaks, so that the session looks like a person going through the website.
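The pacing idea does not depend on any particular browser library, so it can be sketched on its own. The Python example below is one possible approach under stated assumptions: the function names, the pause range of 2 to 6 seconds, and the longer break every tenth page are all illustrative choices, not values taken from Puppeteer or from any anti-bot system.

```python
import random
import time


def human_delays(page_count, min_pause=2.0, max_pause=6.0,
                 break_every=10, break_length=30.0, rng=None):
    """Return one pause (in seconds) per page, with a longer break now and then."""
    if rng is None:
        rng = random.Random()
    delays = []
    for page_number in range(1, page_count + 1):
        # A random pause avoids the fixed rhythm that gives a bot away.
        pause = rng.uniform(min_pause, max_pause)
        if page_number % break_every == 0:
            # Every few pages, take a longer break as a person might.
            pause += break_length
        delays.append(pause)
    return delays


def scrape_with_pacing(urls, fetch, sleep=time.sleep, **pacing):
    """Call fetch(url) for each URL and sleep a human-like interval after it."""
    results = []
    for url, pause in zip(urls, human_delays(len(urls), **pacing)):
        results.append(fetch(url))
        sleep(pause)
    return results
```

Here `fetch` stands for whatever actually loads the page, for example a function that drives a headless browser. Passing `sleep` as a parameter lets you swap in a no-op while testing so that the run does not really wait.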

Puppeteer can also create screenshots and PDFs of web pages and generate pre-rendered content by crawling a Single-Page Application. In addition, it can handle UI testing, form submission, keyboard input, and other similar tasks, all of which can run through a proxy.

Routing Puppeteer through a proxy also lets you hide your original access location or open a geo-restricted website. Some proxies cache responses to common requests as well, which can help you scrape data faster.

Rotate between the most common user agents

A user agent is a string of data your browser sends to the website it is visiting. The string contains information such as the operating system, type of application, and software version. Websites use this information to optimize the viewing experience for you. Many websites block requests that do not have a valid user agent.

If you use a scraper with a single user agent, a website can recognize your scraper even if you are using proxies, because every request carries the same identifying string. To avoid this, create a pool of user agents and rotate between them so you are harder to trace.

While rotating your user agents, make sure you also send the other headers a real browser would, as an anti-scraping tool can identify a request that has a user agent but is missing those headers as a bot. To get the best results, rotate the full set of headers together with the user agent.
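A minimal Python sketch of this idea keeps each user agent together with its matching headers and picks a whole profile at random for every request. The profiles below are illustrative assumptions: real browsers send more headers than these, and the version numbers will go out of date, so treat the strings as placeholders to refresh from current browsers. The name `build_request` is invented for this example.

```python
import random
import urllib.request

# Illustrative browser profiles (assumption). Each profile bundles a user
# agent with headers that plausibly accompany it, so they rotate together.
HEADER_PROFILES = [
    {
        "User-Agent": ("Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
                       "AppleWebKit/537.36 (KHTML, like Gecko) "
                       "Chrome/120.0.0.0 Safari/537.36"),
        "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
        "Accept-Language": "en-US,en;q=0.9",
    },
    {
        "User-Agent": ("Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) "
                       "AppleWebKit/605.1.15 (KHTML, like Gecko) "
                       "Version/17.0 Safari/605.1.15"),
        "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
        "Accept-Language": "en-GB,en;q=0.8",
    },
    {
        "User-Agent": ("Mozilla/5.0 (X11; Linux x86_64; rv:121.0) "
                       "Gecko/20100101 Firefox/121.0"),
        "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
        "Accept-Language": "en-US,en;q=0.5",
    },
]


def build_request(url, rng=random):
    """Return a urllib Request for url carrying one randomly chosen header profile."""
    profile = rng.choice(HEADER_PROFILES)
    # Copy the profile so later changes to the request cannot alter the pool.
    return urllib.request.Request(url, headers=dict(profile))
```

Choosing a whole profile, rather than mixing a random user agent with fixed headers, keeps each request internally consistent. A Firefox user agent paired with Chrome-style headers is itself a signal an anti-scraping tool could pick up.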

Conclusion

Web scraping is an excellent tool for gathering data quickly with minimal effort. Many business strategies rely on staying up to date with recent market trends, and web scraping can help you gather that data quickly. To get the most out of it, remember to use residential proxies, run a headless browser with Puppeteer, and rotate between common user agents.

Story By Efrat Vulfsons